I’ve often referenced the notion of the “threat landscape”: the physical and digital threats facing asset holders. Most commonly, we see scattered $5 wrench attacks and phishing schemes that target people rather than protocols. But over the last 90 days, the threat landscape has materially transformed from a series of skirmishes into full-scale siege warfare.

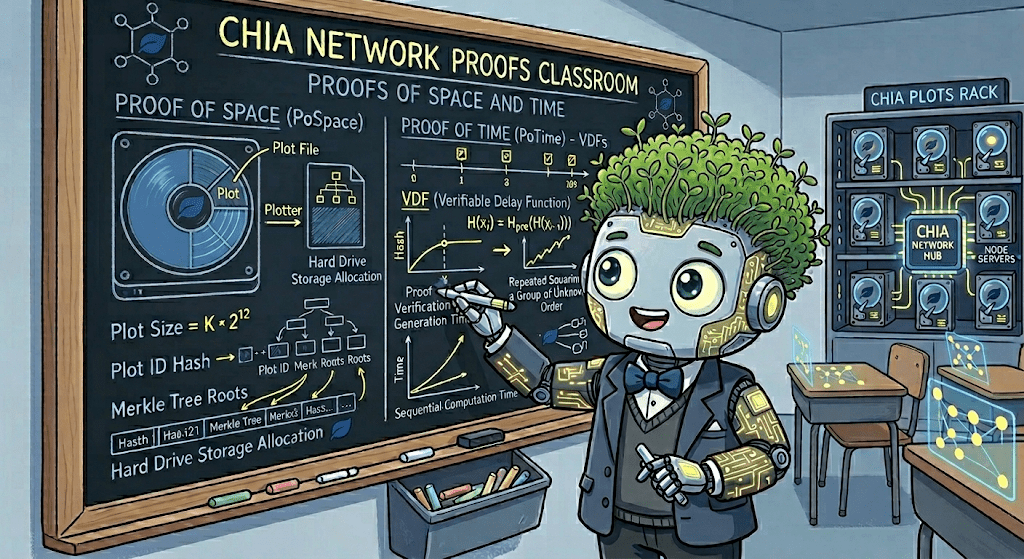

For the last three months, the Chia testnet has been under a relentless, 24/7 barrage. We haven’t just been seeing more security researchers; we’ve been seeing augmented ones. We are currently witnessing the dawn of the LLM-powered attacker. Security researchers (and some less-than-friendly actors) are using AI tooling to fuzz our code, probe our consensus, and stress-test every line of Chialisp we’ve ever written.

The volume of bounty submissions has skyrocketed. At its peak, we were buried under a mountain of reports (over a thousand in a six-week period). More importantly, the noise and hallucination of past agentic submissions are gone. In their place are bona fide, credible threats, just waiting for someone to prompt the agent to write a payload for exploitation.

I want to note that some of the issues we are patching today were discovered by bug bounty researchers during this wave of submissions. I want to thank all of you who followed responsible disclosure best practices and submitted bounties through our HackerOne portal. We hope to continue seeing high interest from the security researcher community. Without you, we surely would not be as secure as we are now.

Fighting Fire with… Math?

When you’re under siege, you don’t just add more walls; you must also build better ways to see who is attacking and from where.

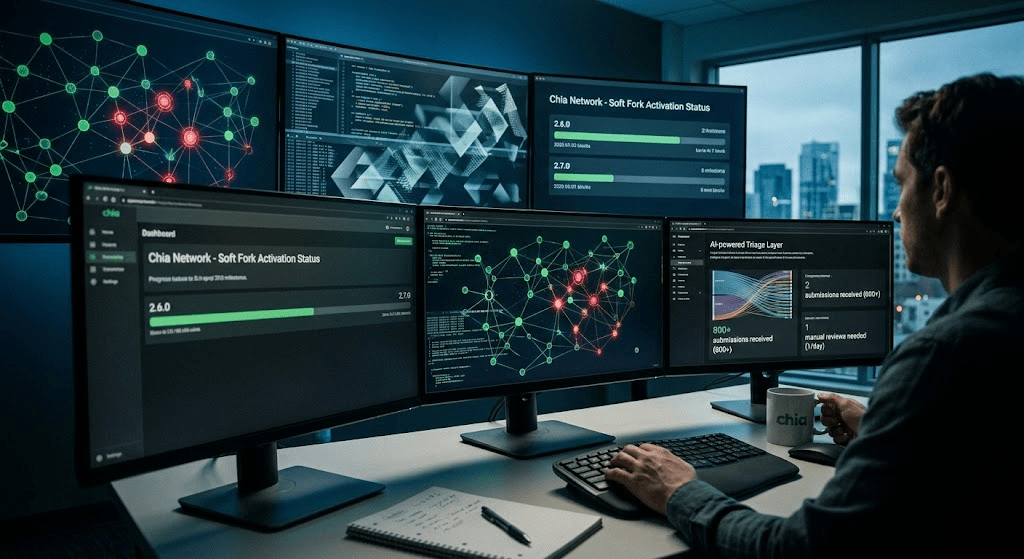

To keep up with the deluge of newfound threats, our security team began training our own internal security AI tools. We realized that we needed a system that could “think” like the researchers on our staff and the researchers overwhelming us, while also grasping the context of our unique coinset model and BLS signature architecture.

We’ve spent the last month refining these models internally. The results have been transformative. This month alone, we received over 800 bug bounty submissions. In the “old days” (about four months ago), that would have paralyzed our engineering team for months. Today, our internal AI triage layers handle much of the heavy lifting, narrowing those 800+ entries down to roughly one “true” positive per day that requires manual human triage.

But something else happened during this process.

Rather than just build a better lens to look at our enthusiastic researchers, we made the decision to point that lens at ourselves. As we turned our new security tooling inward to conduct deep path research on our own codebase, it pointed us toward a series of potential edge cases and security-related issues that had never been surfaced by traditional auditing, manual review, or outside researchers.

So, Why 2.7.0?

This brings us to today. We are releasing Chia 2.7.0.

This is a soft fork release that will activate at block 8,655,000, around April 29/30. That is the same block as the soft fork changes in 2.6.0, which was released on February 11.
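For readers who want to check the calendar estimate against their own node’s height, here is a minimal sketch of the arithmetic. It assumes Chia’s target rate of roughly 4,608 blocks per day (about 18.75 seconds per block). The function name, the example height, and the example date are hypothetical and for illustration only. Real block times vary, so treat the result as a rough projection.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the network averages its target of ~4,608 blocks per day.
TARGET_BLOCKS_PER_DAY = 4608
SECONDS_PER_DAY = 86_400

# Activation height stated in this post.
ACTIVATION_HEIGHT = 8_655_000


def estimate_activation(current_height: int, now: datetime) -> datetime:
    """Project when ACTIVATION_HEIGHT is reached if the target block rate holds."""
    remaining_blocks = max(ACTIVATION_HEIGHT - current_height, 0)
    seconds_left = remaining_blocks * SECONDS_PER_DAY / TARGET_BLOCKS_PER_DAY
    return now + timedelta(seconds=seconds_left)


if __name__ == "__main__":
    # Hypothetical inputs: replace with your node's current peak height and time.
    example_height = ACTIVATION_HEIGHT - 10 * TARGET_BLOCKS_PER_DAY
    example_now = datetime(2000, 1, 1, tzinfo=timezone.utc)
    print(estimate_activation(example_height, example_now).isoformat())
```

In practice, you would pass your node’s current peak height and `datetime.now(timezone.utc)` rather than the placeholder values shown here.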

The goal of the 2.6.0 patch was to clean up the codebase in preparation for the hard fork we intend to release with Chia 3.0, and to fix some bugs so that our coming product launches go more smoothly. Those efforts remain important, but we wanted to give the fixes in 2.7.0 a bit more time to cook, so we elected to release two sets of changes, one after the other.

By activating both releases at the same block, we believe the security and bug fixes in 2.7.0, together with the cleanup in 2.6.0, will provide the safest and most durable environment for consensus going forward.

Effectively, 2.7.0 supersedes 2.6.0. But the changes were distinct enough in function that we felt it prudent to separate the releases for our own quality controls and release process concerns.

Candidly, releasing a soft fork on such a compressed schedule is not our preference. We value a deliberate and steady approach to consensus changes. However, the insights gained from our inward-facing AI research (in addition to the efforts of external researchers) have made it clear that the risk to the chain, and to consensus itself, is simply too large to ignore.

The issues we’ve identified are sophisticated. They aren’t the kind of things a human catches while glancing at a PR; they are the result of machine-scale analysis of state transitions and cryptographic edge cases. These updates are critical to the long-term resilience of the network.

Our Commitment to Transparency

We are asking the entire community of farmers, node operators, and ecosystem partners to adopt this update immediately.

We aren’t going to ask for your trust without providing the “why.” Once we reach 51% adoption and the network is secured against these specific vectors, we will release full, detailed post-mortems for every issue we are patching in this release.

Security is a game of cat and mouse, but the mouse just got a massive intelligence upgrade. We’ve leveled up our own defenses to match, and 2.7.0 is the direct result of that innovation.

Let’s get the network updated. See you on the other side of the fork.